As technology develops, more and more tools emerge that can threaten democracy. Among them are applications that use voice cloning (also referred to as deepfake audio). They are cheap and easy to use, yet their output is difficult to detect.

What is voice cloning?

Voice cloning involves reproducing the timbre of a specific person’s voice using artificial intelligence. The most popular platforms, such as ElevenLabs, offer this service for a small fee. For less than US$1 (for the first month), we can clone virtually any voice. All we need is a five-minute sample, which we upload to the ElevenLabs platform. Then, by typing text into the appropriate dialog box, we can make that person say anything we like.

Widely available tutorials in Polish and English make this technology accessible to the average Internet user.

Deepfake Audio as a Threat to Democracy

Elections in Slovakia

A few days before the elections in Slovakia, a recording began circulating on social media. It featured the voices of Michal Šimečka, leader of the Progressive Slovakia party, and Monika Tódová, a journalist from Denník N. In it, they allegedly discussed rigging the election by buying votes from the marginalised Roma minority.

AFP’s fact-checking service quickly flagged the recording as false. Crucially, however, it was released during the pre-election silence period, which limited politicians’ ability to deny it publicly.

The leader of the British opposition, Sir Keir Starmer, fell victim to deepfake audio

Sir Keir Starmer, leader of the British Labour Party, was also a victim of voice cloning. In recordings that appeared on Twitter, he can allegedly be heard harshly criticising members of his party and the city of Liverpool. Both recordings were fakes, but they remain in circulation to this day. The X post carrying one of them has already received over 1.5 million views and has still not been labelled as manipulated.

Other cases in Europe and around the world

According to NewsGuard, as reported by The Washington Post, 17 TikTok accounts used artificial intelligence to spread false narratives. Together they generated 336 million views and 14.5 million likes. In recent months, these accounts have been posting AI-generated videos with fake audio. They claimed, for example, that former U.S. President Barack Obama was linked to the death of his personal chef, that Oprah Winfrey is a “sex trader”, and that actor Jamie Foxx was paralysed and blinded by a COVID-19 vaccine. TikTok removed the material only after NewsGuard notified it.

Voice Cloning in Poland: From Politics to #PandoraGate

In Poland, voice cloning technology has not yet caused any major scandals. However, its growing use is controversial and has not gone unnoticed by the public.

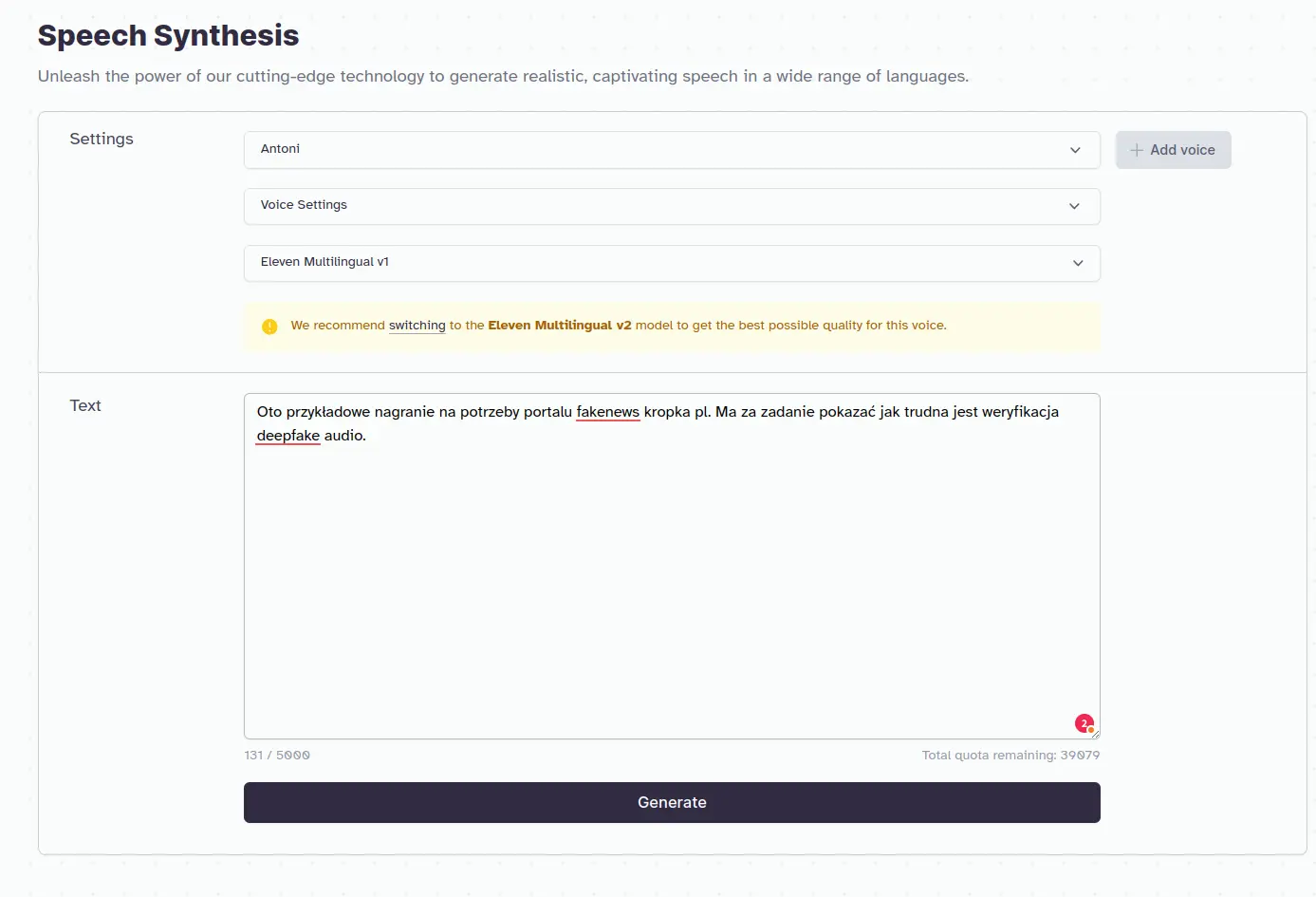

Political experiments: the case of Platforma Obywatelska

In one of its campaign spots, Platforma Obywatelska used a cloned voice of Prime Minister Mateusz Morawiecki to highlight internal tensions in the ruling camp. In the recording, a voice confusingly similar to the Prime Minister’s reads leaked e-mails from Michał Dworczyk’s mailbox. The e-mails concern Zbigniew Ziobro’s disloyalty. This contrasts with a previously quoted statement by Mateusz Morawiecki, in which he claims that the Zjednoczona Prawica camp is “rock solid”.

The surprising experiment sparked a wave of criticism, forcing the party to clarify that the voice had been generated using AI. In later spots, Platforma Obywatelska was more transparent and disclosed that they had been created using AI.

#PandoraGate: YouTubers, paedophilia and technology

One of the events that shocked the Polish public was a scandal involving accusations of paedophilia against well-known Polish YouTubers, later dubbed #PandoraGate. Konopskyy, a YouTuber who helped expose the scandal, used deepfake audio in his video. AI generated the voice of Stuart “Stuu” to better illustrate the topic of the recording. Importantly, Konopskyy clearly and legibly labelled the voices as generated by artificial intelligence.

TikTok, Jarosław Kaczyński and… Minecraft

TikTok, a platform intended for entertainment, has been flooded with fake recordings of various politicians. These include, for instance, videos in which Jarosław Kaczyński speaks bluntly about his opponents, or in which he and President Andrzej Duda play Minecraft.

Despite their humorous tone, such cases prompt reflection on the potentially serious consequences of voice cloning.

The reaction of social media giants

Social media platforms have not responded adequately to the scale of the problem. In the case of Sir Keir Starmer’s recordings, X (formerly Twitter) added no warning that the content might be manipulated. It is still available on the site and continues to spread disinformation. Meta and Google are reportedly working on ways to label AI-generated content, but no results are visible yet. For now, the burden falls entirely on fact-checking organisations and the media. It was fact-checkers who flagged the Michal Šimečka recording as manipulated. Facebook’s role was marginal: the recording was only labelled, not deleted. It can still be viewed and listened to and, with a little effort, downloaded and redistributed.

TikTok is also reportedly working on tools that automatically classify AI-generated content. For now, the process is manual. The platform removes AI-based manipulations in very popular videos when the public figure concerned files a complaint. This happened with Tom Hanks, whose voice and face were used without his knowledge or consent to advertise dental products. It also happened with a deepfake of MrBeast promoting an iPhone scam.

Detecting deepfake audio

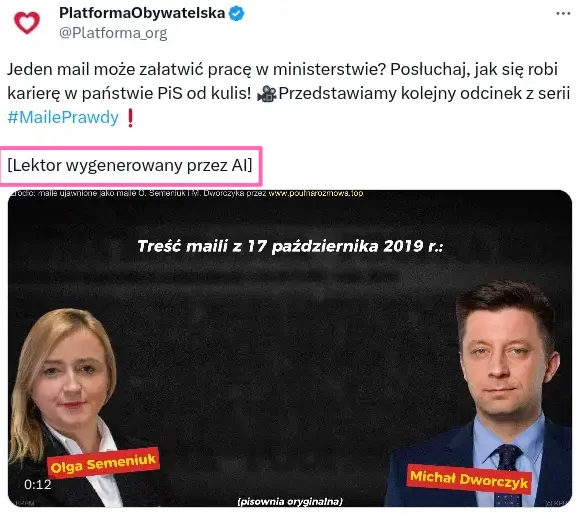

Unfortunately, effective tools for detecting AI-generated voices are currently lacking, which makes cloned voices hard to spot. For example, ElevenLabs provides a classifier that is supposed to detect whether a given recording was generated or manipulated with ElevenLabs. However, adding some background noise (e.g. birds singing or traffic) is enough to make the tool completely unreliable. Here is an example:

Using ElevenLabs, we generated a simple recording with one of the many available synthetic voices and ran it through the classifier.

Results:

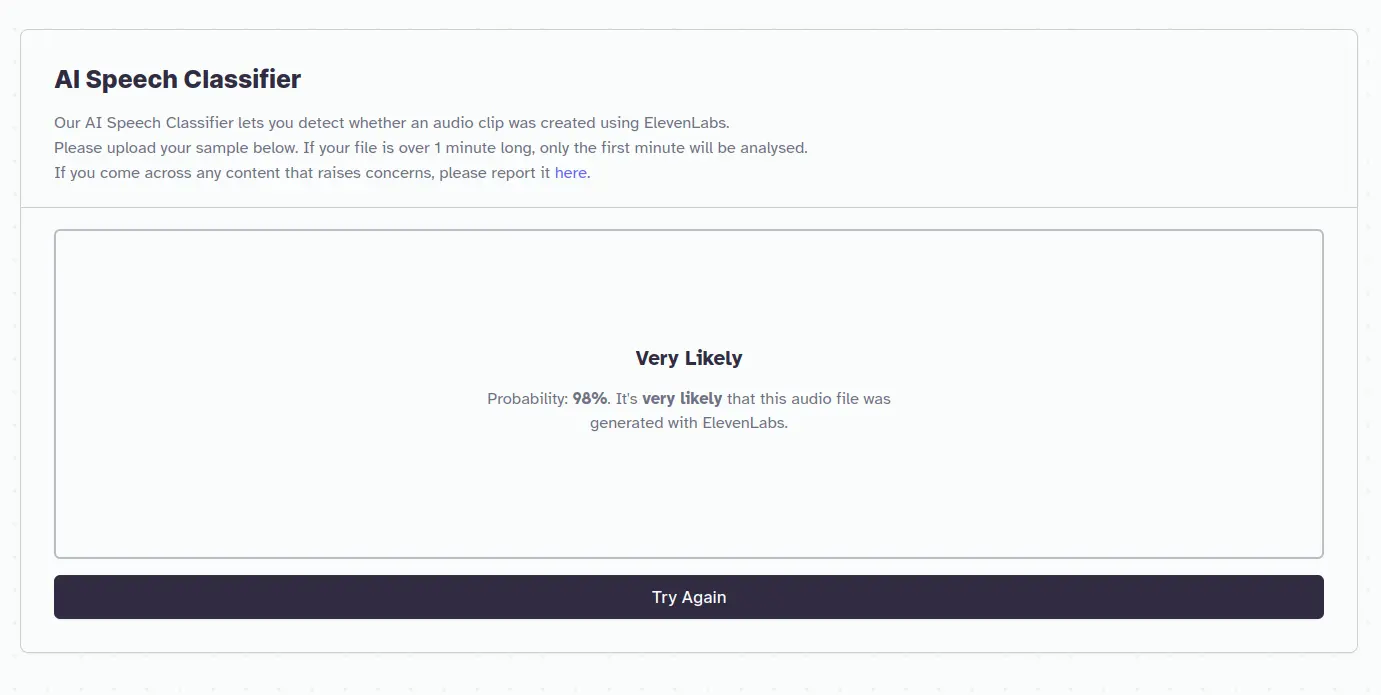

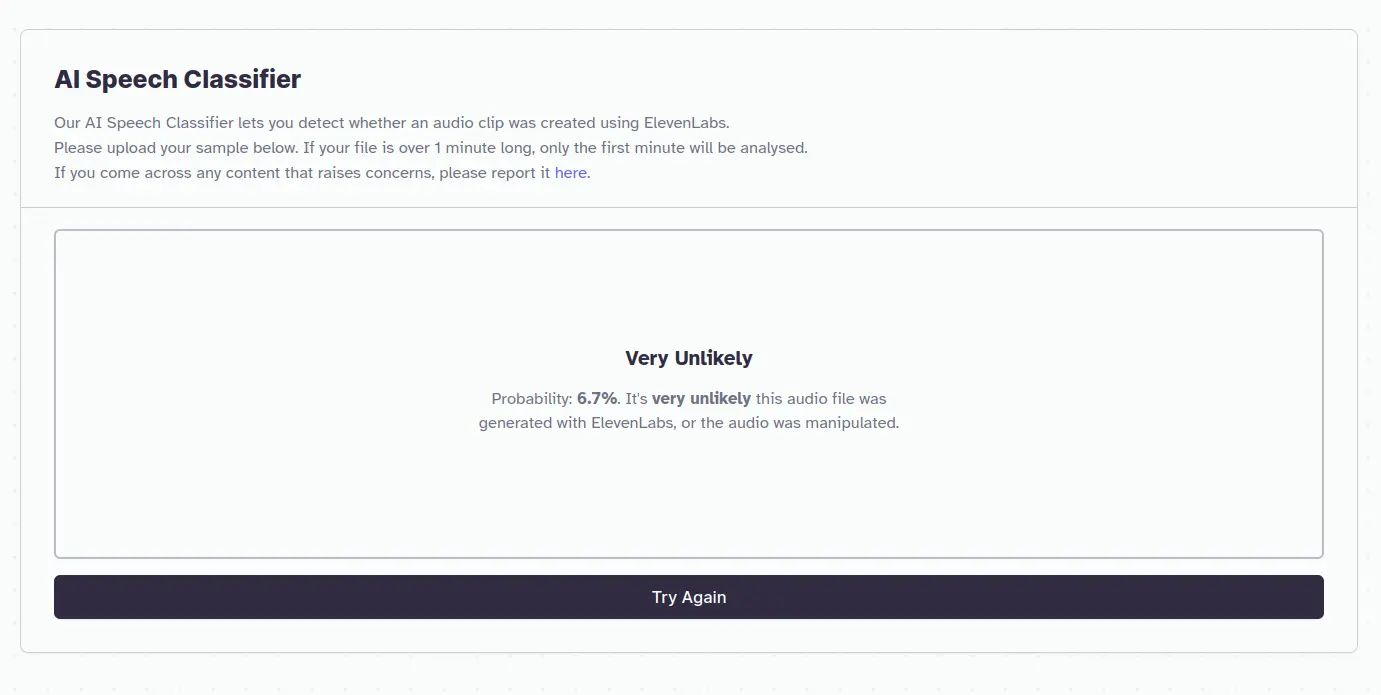

The classifier correctly recognised that the audio was AI-generated. However, once we added background sounds (birds singing, traffic, etc.), the result was completely different:

Results:

All it took was adding a few extra sounds. The probability of AI generation reported by the ElevenLabs classifier then dropped from 98% to 6.7%.

Modifying the sound file took us two minutes. This simple experiment shows how difficult it is to detect this type of manipulation. Disinformation actors can be expected to distort recordings in the same way to hinder verification, which would render currently available classifiers useless.

Summary

Voice cloning technology is developing rapidly and creating new challenges for democracy and for the credibility of media reporting. At this point, only a joint effort by the media, fact-checking organisations and social media platforms can form even a minimal barrier against the spread of this form of disinformation. Let’s hope that, in the near future, technological solutions not yet available to the public will also help combat this significant problem.

Sources:

The Washington Post: https://www.washingtonpost.com/technology/2023/10/13/ai-voice-cloning-deepfakes/

Wired: https://www.wired.co.uk/article/slovakia-election-deepfakes

AFP: https://fakty.afp.com/doc.afp.com.33WY9LF

DIG.watch: https://dig.watch/updates/meta-platforms-develops-labels-for-ai-generated-content

Wirtualnemedia.pl: https://www.wirtualnemedia.pl/artykul/pandora-gate-sylwester-wardega-stuu-boxdel-dubiel-fagata-pedofilia

Factcheckhub: https://factcheckhub.com/ai-voice-technology-used-to-create-misleading-videos-on-tiktok-report/